2025 |

|

| SPACE: 3D Spatial Co-operation and Exploration Framework for Robust Mapping and Coverage with Multi-Robot Systems Journal Article IEEE Robotics and Automation Letters, 10 (12), pp. 13074–13081, 2025. Tags: cooperation, multi-robot systems, perception @article{Ghanta2025, title = {SPACE: 3D Spatial Co-operation and Exploration Framework for Robust Mapping and Coverage with Multi-Robot Systems}, author = {Sai Krishna Ghanta and Ramviyas Parasuraman}, url = {https://ieeexplore.ieee.org/document/11222879}, doi = {10.1109/LRA.2025.3627118}, year = {2025}, date = {2025-12-12}, journal = {IEEE Robotics and Automation Letters}, volume = {10}, number = {12}, pages = {13074–13081}, abstract = {In indoor environments, multi-robot visual (RGB-D) mapping and exploration hold immense potential for application in domains such as domestic service and logistics, where deploying multiple robots in the same environment can significantly enhance efficiency. However, there are two primary challenges: (1) the “ghosting trail” effect, which occurs when inter-robot views overlap, producing temporally inconsistent, duplicated surfaces that degrade point-cloud reconstruction accuracy, and (2) the oversight of visual reconstructions in selecting the most effective frontiers for exploration. Given these challenges are interrelated, we address them together by proposing a new semi-distributed framework (SPACE) for spatial cooperation in indoor environments that enables enhanced coverage and 3D mapping. SPACE leverages geometric techniques, including “mutual awareness” and a “dynamic robot filter,” to overcome spatial mapping constraints. Additionally, we introduce a novel spatial frontier detection system and map merger, integrated with an adaptive frontier assigner for optimal coverage balancing the exploration and reconstruction objectives. 
In extensive ROS-Gazebo simulations and real-world experiments, SPACE demonstrated superior performance over state-of-the-art approaches in both exploration and mapping metrics, demonstrating significant mitigation of the ghosting effects by multiple magnitudes.}, keywords = {cooperation, multi-robot systems, perception}, pubstate = {published}, tppubtype = {article} } |

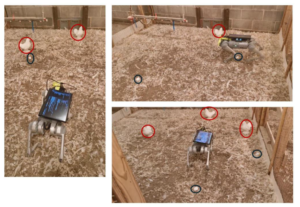

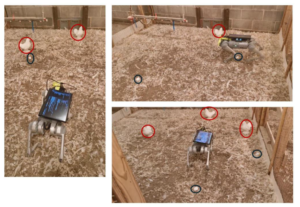

| Autonomous Navigation of a Quadruped Robot to Approach Floor Eggs and Path Optimization Analysis for Commercial Feasibility Journal Article American Society of Agricultural and Biological Engineers, 41 (6), pp. 733-747, 2025. Tags: autonomy, control, navigation, perception, planning @article{Mandiga2025, title = {Autonomous Navigation of a Quadruped Robot to Approach Floor Eggs and Path Optimization Analysis for Commercial Feasibility}, author = {Aravind Mandiga and Guoming Li and Tianming Liu and Ramviyas Parasuraman and Ramana M Pidaparti and Venkat UC Bodempudi and Samuel E Aggrey}, url = {https://elibrary.asabe.org/abstract.asp?AID=55713}, doi = {10.13031/aea.16384}, year = {2025}, date = {2025-01-01}, journal = {American Society of Agricultural and Biological Engineers}, volume = {41}, number = {6}, pages = {733-747}, abstract = {Floor eggs (i.e., eggs laid on the litter floor) are a major problem in cage-free hen systems and account for approximately 5% to 6% of daily egg production. Floor eggs may be contaminated and pecked by birds, which can induce egg eating, degradation of egg quality, and risk of additional floor eggs if not collected in a timely manner. Currently, floor eggs require time-consuming manual collection in daily flock inspection. The objective was to develop autonomous navigation for a quadruped robot to approach floor eggs and to evaluate commercial feasibility through optimized routing strategies. The robot was equipped with an RGB-Depth camera for object detection and depth estimation, and multiple deep learning object detection models were evaluated. Mathematical operations associated with imagery coordinates are converted to real-world trajectories for robot movement controls. The robot was tested at speeds of 0.27, 0.34, 0.41, 0.52, and 0.68 m/s to approach floor eggs. Results show the model successfully localizes floor eggs and hens with over 95% precision, recall, and mAP50(B). 
The robot approaches floor eggs with an average accuracy of 90%. Commercial feasibility was assessed through mathematical optimization analysis using boustrophedon cellular decomposition for two payload scenarios (50 and 77 eggs) in a typical 50,000-hen facility (380 x 18.2 m). Optimization analysis demonstrated operational viability with total daily travel distances of 10.7 km (50-egg payload) and 7.8 km (77-egg payload) for seven daily charge cycles, successfully transferring 2,000 floor eggs to the conveyor belts. These findings show great potential for quadruped robot navigation and commercial implementation for floor egg collection.}, keywords = {autonomy, control, navigation, perception, planning}, pubstate = {published}, tppubtype = {article} } |

| Policies over Poses: Reinforcement Learning based Distributed Pose-Graph Optimization for Multi-Robot SLAM Conference 2025 IEEE International Symposium on Multi-Robot and Multi-Agent Systems (MRS), 2025. Tags: learning, localization, mapping, multi-robot systems, perception @conference{Ghanta2025c, title = {Policies over Poses: Reinforcement Learning based Distributed Pose-Graph Optimization for Multi-Robot SLAM}, author = {Sai Krishna Ghanta and Ramviyas Parasuraman}, url = {https://ieeexplore.ieee.org/document/11357260}, doi = {10.1109/MRS66243.2025.11357260}, year = {2025}, date = {2025-12-04}, booktitle = {2025 IEEE International Symposium on Multi-Robot and Multi-Agent Systems (MRS)}, abstract = {We consider the distributed pose-graph optimization (PGO) problem, which is fundamental in accurate trajectory estimation in multi-robot simultaneous localization and mapping (SLAM). Conventional iterative approaches linearize a highly non-convex optimization objective, requiring repeated solving of normal equations, which often converge to local minima and thus produce suboptimal estimates. We propose a scalable, outlier-robust distributed planar PGO framework using Multi-Agent Reinforcement Learning (MARL). We cast distributed PGO as a partially observable Markov game defined on local pose-graphs, where each action refines a single edge's pose estimate. A graph partitioner decomposes the global pose graph, and each robot runs a recurrent edge-conditioned Graph Neural Network (GNN) encoder with adaptive edge-gating to denoise noisy edges. Robots sequentially refine poses through a hybrid policy that utilizes prior action memory and graph embeddings. After local graph correction, a consensus scheme reconciles inter-robot disagreements to produce a globally consistent estimate. 
Our extensive evaluations on a comprehensive suite of synthetic and real-world datasets demonstrate that our learned MARL-based actors reduce the global objective by an average of 37.5% more than the state-of-the-art distributed PGO framework, while enhancing inference efficiency by at least 6X. We also demonstrate that actor replication allows a single learned policy to scale effortlessly to substantially larger robot teams without any retraining. Code is publicly available at https://github.com/herolab-uga/policies-over-poses }, keywords = {learning, localization, mapping, multi-robot systems, perception}, pubstate = {published}, tppubtype = {conference} } |

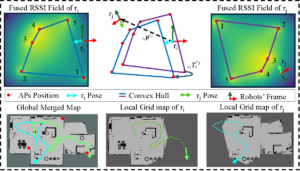

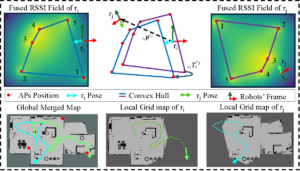

| MGPRL: Distributed Multi-Gaussian Processes for Wi-Fi-based Multi-Robot Relative Localization in Large Indoor Environments Conference 2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2025. Tags: cooperation, localization, multi-robot systems, networking, perception @conference{Ghanta2025b, title = {MGPRL: Distributed Multi-Gaussian Processes for Wi-Fi-based Multi-Robot Relative Localization in Large Indoor Environments}, author = {Sai Krishna Ghanta and Ramviyas Parasuraman}, url = {https://ieeexplore.ieee.org/document/11247180}, doi = {10.1109/IROS60139.2025.11247180}, year = {2025}, date = {2025-10-19}, booktitle = {2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS)}, abstract = {Relative localization is a crucial capability for multi-robot systems operating in GPS-denied environments. Existing approaches for multi-robot relative localization often depend on costly or short-range sensors like cameras and LiDARs. Consequently, these approaches face challenges such as high computational overhead (e.g., map merging) and difficulties in disjoint environments. To address this limitation, this paper introduces MGPRL, a novel distributed framework for multi-robot relative localization using convex-hull of multiple Wi-Fi access points (AP). To accomplish this, we employ co-regionalized multi-output Gaussian Processes for efficient Radio Signal Strength Indicator (RSSI) field prediction and perform uncertainty-aware multi-AP localization, which is further coupled with weighted convex hull-based alignment for robust relative pose estimation. Each robot predicts the RSSI field of the environment by an online scan of APs in its environment, which are utilized for position estimation of multiple APs. To perform relative localization, each robot aligns the convex hull of its predicted AP locations with that of the neighbor robots. 
This approach is well-suited for devices with limited computational resources and operates solely on widely available Wi-Fi RSSI measurements without necessitating any dedicated pre-calibration or offline fingerprinting. We rigorously evaluate the performance of the proposed MGPRL in ROS simulations and demonstrate it with real-world experiments, comparing it against multiple state-of-the-art approaches. The results showcase that MGPRL outperforms existing methods in terms of localization accuracy and computational efficiency.}, keywords = {cooperation, localization, multi-robot systems, networking, perception}, pubstate = {published}, tppubtype = {conference} } |

| SPACE: 3D Spatial Co-operation and Exploration Framework for Robust Mapping and Coverage with Multi-Robot Systems Workshop IEEE ICRA 2025 Workshop on Block by Block Collaborative Strategies for Multi-agent Robotic Construction, 2025. Tags: mapping, multi-robot systems, perception, planning @workshop{Ghanta2025d, title = {SPACE: 3D Spatial Co-operation and Exploration Framework for Robust Mapping and Coverage with Multi-Robot Systems}, author = {Sai Krishna Ghanta and Ramviyas Parasuraman}, url = {https://cearlab.github.io/blockbyblock.github.io/index.html}, year = {2025}, date = {2025-05-19}, booktitle = {IEEE ICRA 2025 Workshop on Block by Block Collaborative Strategies for Multi-agent Robotic Construction}, abstract = {Multi-robot systems hold promise for accelerating cooperative construction tasks such as site preparation and modular assembly. However, dynamic inter-robot occlusions in 3D point-cloud mapping introduce ghosting artifacts that compromise surface reconstruction accuracy and impede downstream planning for grading and leveling. Furthermore, traditional 2D grid-based frontier approaches fail to capture volumetric nuances in partially reconstructed areas, limiting exploration. We propose SPACE, a semi-distributed framework that (1) employs geometric mutual-awareness coupled with image-plane clustering to suppress dynamic robot artifacts, and (2) introduces a bi-variate frontier detection and assignment scheme that classifies and prioritizes both unexplored and weakly mapped regions. SPACE achieves up to 99% reduction in ghosting volume and 95% exploration coverage in ROS-Gazebo experiments and real-world experiments.}, keywords = {mapping, multi-robot systems, perception, planning}, pubstate = {published}, tppubtype = {workshop} } |

2024 |

|

| Object-Oriented Material Classification and 3D Clustering for Improved Semantic Perception and Mapping in Mobile Robots Conference 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2024), 2024. Tags: learning, mapping, perception @conference{Ravipati2024, title = {Object-Oriented Material Classification and 3D Clustering for Improved Semantic Perception and Mapping in Mobile Robots}, author = {Siva Krishna Ravipati and Ehsan Latif and Suchendra Bhandarkar and Ramviyas Parasuraman}, url = {https://ieeexplore.ieee.org/document/10801936}, doi = {10.1109/IROS58592.2024.10801936}, year = {2024}, date = {2024-10-13}, booktitle = {2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2024)}, pages = {9729-9736}, abstract = {Classification of different object surface material types can play a significant role in the decision-making algorithms for mobile robots and autonomous vehicles. RGB-based scene-level semantic segmentation has been well-addressed in the literature. However, improving material recognition using the depth modality and its integration with SLAM algorithms for 3D semantic mapping could unlock new potential benefits in the robotics perception pipeline. To this end, we propose a complementarity-aware deep learning approach for RGB-D-based material classification built on top of an object-oriented pipeline. The approach further integrates the ORB-SLAM2 method for 3D scene mapping with multiscale clustering of the detected material semantics in the point cloud map generated by the visual SLAM algorithm. Extensive experimental results with existing public datasets and newly contributed real-world robot datasets demonstrate a significant improvement in material classification and 3D clustering accuracy compared to state-of-the-art approaches for 3D semantic scene mapping. 
}, keywords = {learning, mapping, perception}, pubstate = {published}, tppubtype = {conference} } |

2020 |

|

| Material Mapping in Unknown Environments using Tapping Sound Conference 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2020), 2020. Tags: mapping, perception, robotics @conference{Kannan2020, title = {Material Mapping in Unknown Environments using Tapping Sound}, author = {Shyam Sundar Kannan and Wonse Jo and Ramviyas Parasuraman and Byung-Cheol Min}, year = {2020}, date = {2020-10-29}, booktitle = {2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2020)}, abstract = {In this paper, we propose an autonomous exploration and tapping mechanism-based material mapping system for a mobile robot in unknown environments. The proposed system integrates SLAM modules and sound-based material classification to enable a mobile robot to explore an unknown environment autonomously and at the same time identify the various objects and materials in the environment in an efficient manner, creating a material map which localizes the various materials in the environment over the occupancy grid. A tapping mechanism and tapping audio signal processing based on machine learning techniques are exploited for a robot to identify the objects and materials. We demonstrate the proposed system through experiments using a mobile robot platform installed with Velodyne LiDAR, a linear solenoid, and microphones in an exploration-like scenario with various materials. Experiment results demonstrate that the proposed system can create useful material maps in unknown environments.}, keywords = {mapping, perception, robotics}, pubstate = {published}, tppubtype = {conference} } |

2019 |

|

| Wisture: Touch-less Hand Gesture Classification in Unmodified Smartphones Using Wi-Fi Signals Journal Article IEEE Sensors Journal, 19 (1), pp. 257-267, 2019. Tags: networking, perception, robotics @article{Haseeb2018, title = {Wisture: Touch-less Hand Gesture Classification in Unmodified Smartphones Using Wi-Fi Signals}, author = {Mohamed Haseeb and Ramviyas Parasuraman}, url = {https://ieeexplore.ieee.org/document/8493572}, doi = {10.1109/JSEN.2018.2876448}, year = {2019}, date = {2019-01-01}, journal = {IEEE Sensors Journal}, volume = {19}, number = {1}, pages = {257-267}, abstract = {This paper introduces Wisture, a new online machine learning solution for recognizing touch-less dynamic hand gestures on a smartphone. Wisture relies on the standard Wi-Fi Received Signal Strength (RSS) using a Long Short-Term Memory (LSTM) Recurrent Neural Network (RNN), thresholding filters and traffic induction. Unlike other Wi-Fi based gesture recognition methods, the proposed method does not require a modification of the smartphone hardware or the operating system, and performs the gesture recognition without interfering with the normal operation of other smartphone applications. We discuss the characteristics of Wisture, and conduct extensive experiments to compare its performance against state-of-the-art machine learning solutions in terms of both accuracy and time efficiency. The experiments include a set of different scenarios in terms of both spatial setup and traffic between the smartphone and Wi-Fi access points (AP). The results show that Wisture achieves an online recognition accuracy of up to 94% (average 78%) in detecting and classifying three hand gestures.}, keywords = {networking, perception, robotics}, pubstate = {published}, tppubtype = {article} } |
