2026 |

|

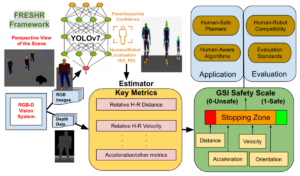

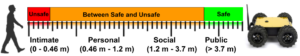

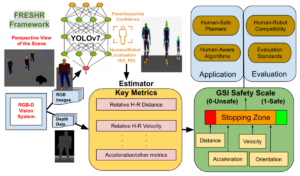

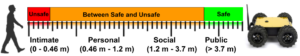

| FRESHR-GSI: A Generalized Safety Model and Evaluation Framework for Mobile Robots in Multi-Human Environments. Conference. 2026 IEEE International Conference on Robotics & Automation (ICRA), forthcoming. Tags: control, evaluation, human-robot interaction. BibTeX: @conference{Pandey2026, title = {FRESHR-GSI: A Generalized Safety Model and Evaluation Framework for Mobile Robots in Multi-Human Environments}, author = {Pranav Pandey and Ramviyas Parasuraman and Prashant Doshi}, url = {https://arxiv.org/abs/2501.03467}, year = {2026}, date = {2026-06-01}, booktitle = {2026 IEEE International Conference on Robotics \& Automation (ICRA)}, abstract = {Human safety is critical in applications involving close human-robot interactions (HRI) and is a key aspect of physical compatibility between humans and robots. While measures of human safety in HRI exist, these mainly target industrial settings involving robotic manipulators. Less attention has been paid to settings where mobile robots and humans share the space. This paper introduces a new robot-centered directional framework of human safety. It is particularly useful for evaluating mobile robots as they operate in environments populated by multiple humans. The framework integrates several key metrics, such as each human’s relative distance, speed, and orientation. The core novelty lies in the framework’s flexibility to accommodate different application requirements while allowing for both the robot-centered and external observer points of view. We instantiate the framework by using RGB-D based vision integrated with a deep learning-based human detection pipeline to yield a proxemics-guided generalized safety index (GSI) that instantaneously assesses human safety. We extensively validate GSI’s capability of producing appropriate and fine-grained safety measures in real-world experimental scenarios and demonstrate its superior efficacy against extant safety models.}, keywords = {control, evaluation, human-robot interaction}, pubstate = {forthcoming}, tppubtype = {conference} } |

2025 |

|

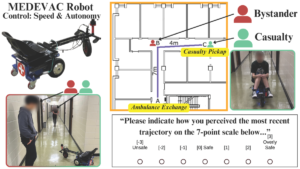

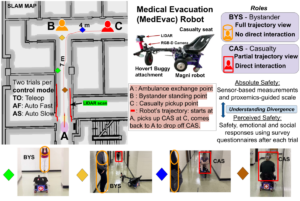

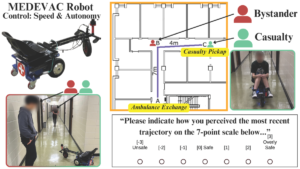

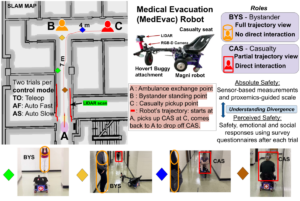

| Analyzing Human Perceptions of a MEDEVAC Robot in a Simulated Evacuation Scenario. Conference. 2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2025. Tags: autonomy, human-robot interaction, navigation, trust. BibTeX: @conference{Goodie2025, title = {Analyzing Human Perceptions of a MEDEVAC Robot in a Simulated Evacuation Scenario}, author = {Tyson Jordan and Pranav Pandey and Prashant Doshi and Ramviyas Parasuraman and Adam Goodie}, url = {https://ieeexplore.ieee.org/document/11246558}, doi = {10.1109/IROS60139.2025.11246558}, year = {2025}, date = {2025-10-19}, booktitle = {2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS)}, abstract = {The use of autonomous systems in medical evacuation (MEDEVAC) scenarios is promising, but existing implementations overlook key insights from human-robot interaction (HRI) research. Studies on human-machine teams demonstrate that human perceptions of a machine teammate are critical in governing the machine’s performance. Consequently, it is essential to identify the factors that contribute to positive human perceptions in human-machine teams. Here, we present a mixed factorial design to assess human perceptions of a MEDEVAC robot in a simulated evacuation scenario. Participants were assigned to the role of casualty (CAS) or bystander (BYS) and subjected to three within-subjects conditions based on the MEDEVAC robot’s operating mode: autonomous-slow (AS), autonomous-fast (AF), and teleoperation (TO). During each trial, a MEDEVAC robot navigated an 11-meter path, acquiring a casualty and transporting them to an ambulance exchange point while avoiding an idle bystander. Following each trial, subjects completed a questionnaire measuring their emotional states, perceived safety, and social compatibility with the robot. Results indicate a consistent main effect of operating mode on reported emotional states and perceived safety. Pairwise analyses suggest that the employment of the AF operating mode negatively impacted perceptions along these dimensions. There were no persistent differences between CAS and BYS responses.}, keywords = {autonomy, human-robot interaction, navigation, trust}, pubstate = {published}, tppubtype = {conference} } |

| Integrating Perceptions: A Human-Centered Physical Safety Model for Human-Robot Interaction. Conference. 2025 34th IEEE International Conference on Robot and Human Interactive Communication (RO-MAN), 2025. Tags: cooperation, human-robot interaction, navigation, trust. BibTeX: @conference{Pandey2025, title = {Integrating Perceptions: A Human-Centered Physical Safety Model for Human-Robot Interaction}, author = {Pranav Kumar Pandey and Ramviyas Parasuraman and Prashant Doshi}, url = {https://ieeexplore.ieee.org/document/11217747}, doi = {10.1109/RO-MAN63969.2025.11217747}, year = {2025}, date = {2025-08-25}, booktitle = {2025 34th IEEE International Conference on Robot and Human Interactive Communication (RO-MAN)}, abstract = {Ensuring safety in human-robot interaction (HRI) is essential to foster user trust and enable the broader adoption of robotic systems. Traditional safety models primarily rely on sensor-based measures, such as relative distance and velocity, to assess physical safety. However, these models often fail to capture subjective safety perceptions, which are shaped by individual traits and contextual factors. In this paper, we introduce and analyze a parameterized general safety model that bridges the gap between physical and perceived safety by incorporating a personalization parameter, ρ, into the safety measurement framework to account for individual differences in safety perception. Through a series of hypothesis-driven human-subject studies in a simulated rescue scenario, we investigate how emotional state, trust, and robot behavior influence perceived safety. Our results show that ρ effectively captures meaningful individual differences, driven by affective responses, trust in task consistency, and clustering into distinct user types. Specifically, our findings confirm that predictable and consistent robot behavior, as well as the elicitation of positive emotional states, significantly enhance perceived safety. Moreover, responses cluster into a small number of user types, supporting adaptive personalization based on shared safety models. Notably, participant role significantly shapes safety perception, and repeated exposure reduces perceived safety for participants in the casualty role, emphasizing the impact of physical interaction and experiential change. These findings highlight the importance of adaptive, human-centered safety models that integrate both psychological and behavioral dimensions, offering a pathway toward more trustworthy and effective HRI in safety-critical domains.}, keywords = {cooperation, human-robot interaction, navigation, trust}, pubstate = {published}, tppubtype = {conference} } |

| GSI- A Proxemics-Guided Generalized Safety Metric For Evaluating Safety in Social Navigation Context. Workshop. IEEE ICRA 2025 Workshop on Advances in Social Navigation: Planning, HRI and Beyond, 2025 (received Best Poster Award). Tags: human-robot interaction, navigation, trust. BibTeX: @workshop{Pandey2025b, title = {GSI- A Proxemics-Guided Generalized Safety Metric For Evaluating Safety in Social Navigation Context}, author = {Pranav Pandey and Ramviyas Parasuraman and Prashant Doshi}, url = {https://socialnav2025.pages.dev/papers/GSI-%20A%20Proxemics-Guided%20Generalized%20Safety%20Metric%20For%20Evaluating%20Safety%20in%20Social%20Navigation%20Context.pdf}, year = {2025}, date = {2025-05-19}, booktitle = {IEEE ICRA 2025 Workshop on Advances in Social Navigation: Planning, HRI and Beyond}, note = {Received Best Poster Award.}, keywords = {human-robot interaction, navigation, trust}, pubstate = {published}, tppubtype = {workshop} } |

2024 |

|

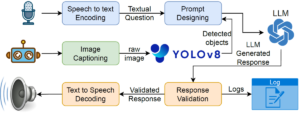

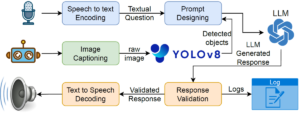

| PhysicsAssistant: An LLM-Powered Interactive Learning Robot for Physics Lab Investigations. Conference. The 33rd IEEE International Conference on Robot and Human Interactive Communication, IEEE RO-MAN 2024, 2024. Tags: assistive devices, human-robot interaction, human-robot interface. BibTeX: @conference{Latif2024bb, title = {PhysicsAssistant: An LLM-Powered Interactive Learning Robot for Physics Lab Investigations}, author = {Ehsan Latif and Ramviyas Parasuraman and Xiaoming Zhai}, doi = {10.1109/RO-MAN60168.2024.10731312}, year = {2024}, date = {2024-08-30}, booktitle = {The 33rd IEEE International Conference on Robot and Human Interactive Communication, IEEE RO-MAN 2024}, abstract = {Robot systems in education can leverage large language models' (LLMs) natural language understanding capabilities to provide assistance and facilitate learning. This paper proposes a multimodal interactive robot (PhysicsAssistant) built on YOLOv8 object detection, cameras, speech recognition, and an LLM-based chatbot to assist students in physics labs. We conduct a user study with ten 8th-grade students to empirically evaluate the performance of PhysicsAssistant with a human expert. The expert rates the assistant's responses to student queries on a 0-4 scale based on Bloom's taxonomy to provide educational support. We have compared the performance of PhysicsAssistant (YOLOv8+GPT-3.5-turbo) with GPT-4 and found that the human expert rating of both systems for factual understanding is the same. However, the rating of GPT-4 for conceptual and procedural knowledge (3 and 3.2 vs 2.2 and 2.6, respectively) is significantly higher than PhysicsAssistant (p $<$ 0.05). On the other hand, the response time of GPT-4 is significantly higher than PhysicsAssistant (3.54 vs 1.64 sec, p $<$ 0.05). Hence, despite the relatively lower response quality of PhysicsAssistant than GPT-4, it has shown potential for being used as a real-time lab assistant to provide timely responses and can offload teachers' labor to assist with repetitive tasks. To the best of our knowledge, this is the first attempt to build such an interactive multimodal robotic assistant for K-12 science (physics) education.}, keywords = {assistive devices, human-robot interaction, human-robot interface}, pubstate = {published}, tppubtype = {conference} } |

| PhysicsAssistant: An LLM-Powered Interactive Learning Robot for Physics Lab Investigations. Workshop. IEEE ICRA 2024 Workshop on Accelerating Discovery in Natural Science Laboratories with AI and Robotics, 2024 (selected for the Pioneer Award). Tags: assistive devices, autonomy, human-robot interaction, human-robot interface, learning. BibTeX: @workshop{Latif2024d, title = {PhysicsAssistant: An LLM-Powered Interactive Learning Robot for Physics Lab Investigations}, author = {Ehsan Latif and Ramviyas Parasuraman and Xiaoming Zhai}, url = {https://sites.google.com/view/icra24-accelerating-discovery}, year = {2024}, date = {2024-05-13}, booktitle = {IEEE ICRA 2024 Workshop on Accelerating Discovery in Natural Science Laboratories with AI and Robotics}, abstract = {Robot systems in education can leverage large language models' (LLMs) natural language understanding capabilities to provide assistance and facilitate learning. This paper proposes a multimodal interactive robot (PhysicsAssistant) built on YOLOv8 object detection, cameras, speech recognition, and an LLM-based chatbot to assist students in physics labs. We conduct a user study with ten 8th-grade students to empirically evaluate the performance of PhysicsAssistant with a human expert. The expert rates the assistant's responses to student queries on a 0-4 scale based on Bloom's taxonomy to provide educational support. We have compared the performance of PhysicsAssistant (YOLOv8+GPT-3.5-turbo) with GPT-4 and found that the human expert rating of both systems for factual understanding is the same. However, the rating of GPT-4 for conceptual and procedural knowledge (3 and 3.2 vs 2.2 and 2.6, respectively) is significantly higher than PhysicsAssistant (p $<$ 0.05). On the other hand, the response time of GPT-4 is significantly higher than PhysicsAssistant (3.54 vs 1.64 sec, p $<$ 0.05). Hence, despite the relatively lower response quality of PhysicsAssistant than GPT-4, it has shown potential for being used as a real-time lab assistant to provide timely responses and can offload teachers' labor to assist with repetitive tasks. To the best of our knowledge, this is the first attempt to build such an interactive multimodal robotic assistant for K-12 science (physics) education.}, note = {Selected for the Pioneer Award}, keywords = {assistive devices, autonomy, human-robot interaction, human-robot interface, learning}, pubstate = {published}, tppubtype = {workshop} } |

2022 |

|

| On Physical Compatibility of Robots in Human-Robot Collaboration Settings. Workshop. ICRA 2022 Workshop on Collaborative Robots and the Work of the Future, 2022. Tags: human-robot interaction. BibTeX: @workshop{Pandey2022b, title = {On Physical Compatibility of Robots in Human-Robot Collaboration Settings}, author = {Pranav Pandey and Ramviyas Parasuraman and Prashant Doshi}, url = {https://sites.google.com/view/icra22ws-cor-wotf/accepted-papers}, year = {2022}, date = {2022-05-23}, booktitle = {ICRA 2022 Workshop on Collaborative Robots and the Work of the Future}, abstract = {Human-Robot Interaction (HRI) is a multidisciplinary field. It has become essential for robots to work with humans in collaboration and teamwork settings, such as collaborative assembly, where they share tasks in an overlapping workspace. While extensive research is available to ensure successful HRI, primarily focusing on the safety factors, our objective is to provide a comprehensive perspective on robots’ compatibility with humans in such settings. Specifically, we highlight the key pillars and elements of Physical Human-Robot Interaction (pHRI) and discuss the valuable metrics for evaluating such systems. To achieve compatibility, we propose that the robot ensure humans’ safety, flexibility in tasks, and robustness to changes in the environment. Ultimately, these elements will help assess robots’ awareness of humans and surroundings and help increase the trustworthiness of robots among human collaborators.}, keywords = {human-robot interaction}, pubstate = {published}, tppubtype = {workshop} } |

2020 |

|

| Needs-driven Heterogeneous Multi-Robot Cooperation in Rescue Missions. Conference. 2020 IEEE International Symposium on Safety, Security, and Rescue Robotics (SSRR 2020), 2020. Tags: human-robot interaction, multi-robot-systems, robotics. BibTeX: @conference{Yang2020b, title = {Needs-driven Heterogeneous Multi-Robot Cooperation in Rescue Missions}, author = {Qin Yang and Ramviyas Parasuraman}, url = {https://arxiv.org/abs/2009.00288}, year = {2020}, date = {2020-11-06}, booktitle = {2020 IEEE International Symposium on Safety, Security, and Rescue Robotics (SSRR 2020)}, abstract = {This paper focuses on the teaming aspects and the role of heterogeneity in a multi-robot system applied to robot-aided urban search and rescue (USAR) missions. We specifically propose a needs-driven multi-robot cooperation mechanism represented through a Behavior Tree structure and evaluate the performance of the system in terms of the group utility and energy cost to achieve the rescue mission in a limited time. From the theoretical analysis, we prove that the needs-driven cooperation in a heterogeneous robot system enables higher group utility compared to a homogeneous robot system. We also perform simulation experiments to verify the proposed needs-driven cooperation and show that the heterogeneous multi-robot cooperation can achieve better performance and increase system robustness by reducing uncertainty in task execution. Finally, we discuss the application to human-robot teaming.}, keywords = {human-robot interaction, multi-robot-systems, robotics}, pubstate = {published}, tppubtype = {conference} } |

| Robot Controlling Robots - A New Perspective to Bilateral Teleoperation in Mobile Robots. Workshop. RSS 2020 Workshop on Reacting to Contact: Enabling Transparent Interactions through Intelligent Sensing and Actuation, 2020. Tags: control, human-robot interaction, networking, robotics. BibTeX: @workshop{Tahir2020, title = {Robot Controlling Robots - A New Perspective to Bilateral Teleoperation in Mobile Robots}, author = {Nazish Tahir and Ramviyas Parasuraman}, url = {https://ankitbhatia.github.io/reacting_contact_workshop/}, year = {2020}, date = {2020-07-12}, booktitle = {RSS 2020 Workshop on Reacting to Contact: Enabling Transparent Interactions through Intelligent Sensing and Actuation}, abstract = {Adaptation to increasing levels of autonomy, from manual teleoperation to complete automation, is of particular interest to the Field Robotics and Human-Robot Interaction communities. Towards that line of research, we introduce and investigate a novel bilateral teleoperation control strategy for a robot-to-robot system. A bilateral teleoperation scheme is typically applied to human control of robots. In this abstract, we look at a different perspective of using a bilateral teleoperation system between robots, where one robot (Labor) is teleoperated by an autonomous robot (Master). To realize such a strategy, our proposed robot system is divided into a master-labor networked scheme where the master robot is located at a remote site, operable by a human user or an autonomous agent, and the labor (follower) robot is located at the operation site. The labor robot is capable of reflecting the odometry commands of the master robot while also navigating its environment through an obstacle detection and avoidance mechanism. An autonomous algorithm such as a typical SLAM-based path planner controls the master robot, which is provided with suitable force feedback that conveys the labor robot's response from its interaction with the environment. We perform preliminary experiments to verify the system feasibility and analyze the motion transparency in different scenarios. The results show promise to investigate this research further and develop this work towards human multi-robot teleoperation.}, keywords = {control, human-robot interaction, networking, robotics}, pubstate = {published}, tppubtype = {workshop} } |
