2025 |

|

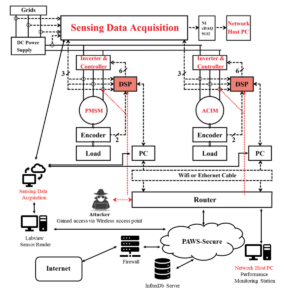

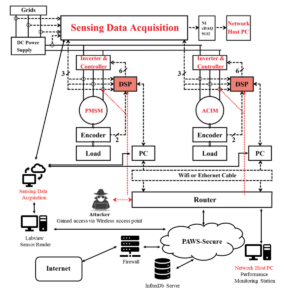

| Real-World Cyber Security Demonstration for Networked Electric Drives. Journal Article. IEEE Journal of Emerging and Selected Topics in Power Electronics, 13 (4), 2025. Tags: control, networking, trust. @article{Yang2025, title = {Real-World Cyber Security Demonstration for Networked Electric Drives}, author = {He Yang and Bowen Yang and Stephen Coshatt and Qi Li and Kun Hu and Bryan Cooper Hammond and Jin Ye and Ramviyas Parasuraman and Wenzhan Song}, url = {https://ieeexplore.ieee.org/document/10924153}, year = {2025}, date = {2025-08-01}, journal = {IEEE Journal of Emerging and Selected Topics in Power Electronics}, volume = {13}, number = {4}, abstract = {In this article, we present the design and implementation of a cyber-physical security testbed for networked electric drive systems, aimed at conducting real-world security demonstrations. To our knowledge, this is one of the first security testbeds for networked electric drives, seamlessly integrating the domains of power electronics, computer science, and cybersecurity. By doing so, the testbed offers a comprehensive platform to explore and understand the intricate and often complex interactions between cyber and physical systems. The core of our testbed consists of four electric machine drives, meticulously configured to emulate small-scale but realistic information technology (IT) and operational technology (OT) networks. This setup both provides a controlled environment for simulating a wide array of cyber-attacks, and mirrors potential real-world attack scenarios with a high degree of fidelity. The testbed serves as an invaluable resource for the study of cyber-physical security, offering a practical and dynamic platform for testing and validating cybersecurity measures in the context of networked electric drive systems. As a concrete example of the testbed’s capabilities, we have developed and implemented a Python-based script designed to execute step-stone attacks over a wireless local area network (WLAN). This script leverages a sequence of target IP addresses, simulating a real-world attack vector that could be exploited by adversaries. To counteract such threats, we demonstrate the efficacy of our developed cyber-attack detection algorithms, which are integral to our testbed’s security framework. Furthermore, the testbed incorporates a real-time visualization system using InfluxDB and Grafana, providing a dynamic and interactive representation of networked electric drives and their associated security monitoring mechanisms. This visualization component not only enhances the testbed’s usability but also offers insightful, real-time data for researchers and practitioners, thereby facilitating a deeper understanding of cyber-physical security dynamics in networked electric drive systems.}, keywords = {control, networking, trust}, pubstate = {published}, tppubtype = {article} } |

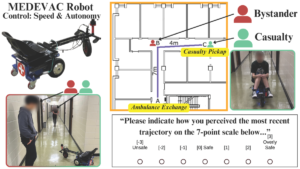

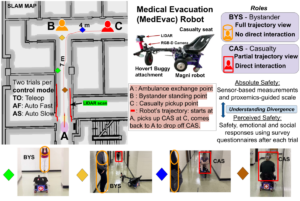

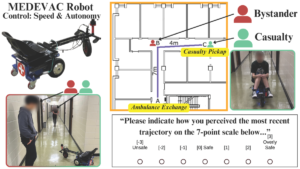

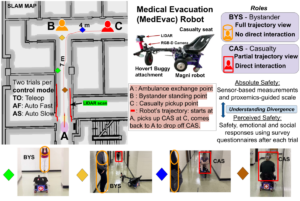

| Analyzing Human Perceptions of a MEDEVAC Robot in a Simulated Evacuation Scenario. Conference. 2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2025. Tags: autonomy, human-robot interaction, navigation, trust. @conference{Goodie2025, title = {Analyzing Human Perceptions of a MEDEVAC Robot in a Simulated Evacuation Scenario}, author = {Tyson Jordan and Pranav Pandey and Prashant Doshi and Ramviyas Parasuraman and Adam Goodie}, url = {https://ieeexplore.ieee.org/document/11246558}, doi = {10.1109/IROS60139.2025.11246558}, year = {2025}, date = {2025-10-19}, booktitle = {2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS)}, abstract = {The use of autonomous systems in medical evacuation (MEDEVAC) scenarios is promising, but existing implementations overlook key insights from human-robot interaction (HRI) research. Studies on human-machine teams demonstrate that human perceptions of a machine teammate are critical in governing the machine’s performance. Consequently, it is essential to identify the factors that contribute to positive human perceptions in human-machine teams. Here, we present a mixed factorial design to assess human perceptions of a MEDEVAC robot in a simulated evacuation scenario. Participants were assigned to the role of casualty (CAS) or bystander (BYS) and subjected to three within-subjects conditions based on the MEDEVAC robot’s operating mode: autonomous-slow (AS), autonomous-fast (AF), and teleoperation (TO). During each trial, a MEDEVAC robot navigated an 11-meter path, acquiring a casualty and transporting them to an ambulance exchange point while avoiding an idle bystander. Following each trial, subjects completed a questionnaire measuring their emotional states, perceived safety, and social compatibility with the robot. Results indicate a consistent main effect of operating mode on reported emotional states and perceived safety. Pairwise analyses suggest that the employment of the AF operating mode negatively impacted perceptions along these dimensions. There were no persistent differences between CAS and BYS responses.}, keywords = {autonomy, human-robot interaction, navigation, trust}, pubstate = {published}, tppubtype = {conference} } |

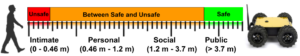

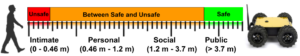

| Integrating Perceptions: A Human-Centered Physical Safety Model for Human-Robot Interaction. Conference. 2025 34th IEEE International Conference on Robot and Human Interactive Communication (RO-MAN), 2025. Tags: cooperation, human-robot interaction, navigation, trust. @conference{Pandey2025, title = {Integrating Perceptions: A Human-Centered Physical Safety Model for Human-Robot Interaction}, author = {Pranav Kumar Pandey and Ramviyas Parasuraman and Prashant Doshi}, url = {https://ieeexplore.ieee.org/document/11217747}, doi = {10.1109/RO-MAN63969.2025.11217747}, year = {2025}, date = {2025-08-25}, booktitle = {2025 34th IEEE International Conference on Robot and Human Interactive Communication (RO-MAN)}, abstract = {Ensuring safety in human-robot interaction (HRI) is essential to foster user trust and enable the broader adoption of robotic systems. Traditional safety models primarily rely on sensor-based measures, such as relative distance and velocity, to assess physical safety. However, these models often fail to capture subjective safety perceptions, which are shaped by individual traits and contextual factors. In this paper, we introduce and analyze a parameterized general safety model that bridges the gap between physical and perceived safety by incorporating a personalization parameter, ρ, into the safety measurement framework to account for individual differences in safety perception. Through a series of hypothesis-driven human-subject studies in a simulated rescue scenario, we investigate how emotional state, trust, and robot behavior influence perceived safety. Our results show that ρ effectively captures meaningful individual differences, driven by affective responses, trust in task consistency, and clustering into distinct user types. Specifically, our findings confirm that predictable and consistent robot behavior, as well as the elicitation of positive emotional states, significantly enhance perceived safety. Moreover, responses cluster into a small number of user types, supporting adaptive personalization based on shared safety models. Notably, participant role significantly shapes safety perception, and repeated exposure reduces perceived safety for participants in the casualty role, emphasizing the impact of physical interaction and experiential change. These findings highlight the importance of adaptive, human-centered safety models that integrate both psychological and behavioral dimensions, offering a pathway toward more trustworthy and effective HRI in safety-critical domains.}, keywords = {cooperation, human-robot interaction, navigation, trust}, pubstate = {published}, tppubtype = {conference} } |

| GSI- A Proxemics-Guided Generalized Safety Metric For Evaluating Safety in Social Navigation Context. Workshop. IEEE ICRA 2025 Workshop on Advances in Social Navigation: Planning, HRI and Beyond, 2025 (received Best Poster Award). Tags: human-robot interaction, navigation, trust. @workshop{Pandey2025b, title = {GSI- A Proxemics-Guided Generalized Safety Metric For Evaluating Safety in Social Navigation Context}, author = {Pranav Pandey and Ramviyas Parasuraman and Prashant Doshi}, url = {https://socialnav2025.pages.dev/papers/GSI-%20A%20Proxemics-Guided%20Generalized%20Safety%20Metric%20For%20Evaluating%20Safety%20in%20Social%20Navigation%20Context.pdf}, year = {2025}, date = {2025-05-19}, booktitle = {IEEE ICRA 2025 Workshop on Advances in Social Navigation: Planning, HRI and Beyond}, note = {Received Best Poster Award.}, keywords = {human-robot interaction, navigation, trust}, pubstate = {published}, tppubtype = {workshop} } |

2022 |


| Sharing Autonomy of Exploration and Exploitation via Control Interface. Workshop. ICRA 2022 Workshop on Shared Autonomy in Physical Human-Robot Interaction: Adaptability and Trust, 2022. Tags: autonomy, human-robot interface, trust. @workshop{Munir2022, title = {Sharing Autonomy of Exploration and Exploitation via Control Interface}, author = {Aiman Munir and Ramviyas Parasuraman}, url = {https://sites.google.com/view/saphri-icra2022/contributions}, year = {2022}, date = {2022-05-23}, booktitle = {ICRA 2022 Workshop on Shared Autonomy in Physical Human-Robot Interaction: Adaptability and Trust}, abstract = {Shared autonomy is a control paradigm that refers to the adaptation of a robot’s autonomy level in dynamic environments while taking human intentions and status into account at the same time. Here, the autonomy level can be changed based on internal/external information and human input. However, there are no clear guidelines or studies that help understand "when" a robot should adapt its autonomy level to different functionalities. Therefore, in this paper, we create a framework that helps to improve the human-robot control interface by allowing humans to adapt the robot’s autonomy level, and we create a study design to gather insights into humans’ preferences for switching autonomy levels based on the current situation. We create two high-level strategies - Exploration to gather more data and Exploitation to make use of current data - for a search and rescue task. These two strategies can be achieved with human inputs or autonomous algorithms. We intend to understand human preferences regarding the autonomy levels of these two strategies (and "when" they want to switch). The analysis is expected to provide insights into designing shared autonomy schemes and algorithms that consider human preferences in adaptively using autonomy levels of certain high-level strategies.}, keywords = {autonomy, human-robot interface, trust}, pubstate = {published}, tppubtype = {workshop} } |

2021 |


| How Can Robots Trust Each Other For Better Cooperation? A Relative Needs Entropy Based Robot-Robot Trust Assessment Model. Conference. 2021 IEEE International Conference on Systems, Man, and Cybernetics (SMC), IEEE, 2021. Tags: evaluation, multi-robot, planning, trust. @conference{Yang2021, title = {How Can Robots Trust Each Other For Better Cooperation? A Relative Needs Entropy Based Robot-Robot Trust Assessment Model}, author = {Qin Yang and Ramviyas Parasuraman}, doi = {10.1109/SMC52423.2021.9659187}, year = {2021}, date = {2021-10-20}, booktitle = {2021 IEEE International Conference on Systems, Man, and Cybernetics (SMC)}, pages = {2656--2663}, publisher = {IEEE}, organization = {IEEE}, abstract = {Cooperation in multi-agent and multi-robot systems can help agents build various formations, shapes, and patterns presenting corresponding functions and purposes adapting to different situations. Relationships between agents such as their spatial proximity and functional similarities could play a crucial role in cooperation between agents. Trust level between agents is an essential factor in evaluating their relationships' reliability and stability, much as people do. This paper proposes a new model called Relative Needs Entropy (RNE) to assess trust between robotic agents. RNE measures the distance of needs distribution between individual agents or groups of agents. To exemplify its utility, we implement and demonstrate our trust model through experiments simulating a heterogeneous multi-robot grouping task in a persistent urban search and rescue mission consisting of tasks at two levels of difficulty. The results suggest that RNE trust-based grouping of robots can achieve better performance and adaptability for diverse task execution compared to the state-of-the-art energy-based or distance-based grouping models.}, keywords = {evaluation, multi-robot, planning, trust}, pubstate = {published}, tppubtype = {conference} } |
