2026

Imitation-BT: Automating Behavior Tree Generation by Echoing Reinforcement Learning Agents. Conference (forthcoming), 2026 IEEE International Conference on Robotics & Automation (ICRA). Tags: autonomy, behavior-trees, learning, planning

Abstract: Understanding an autonomous agent's decision-making prowess is of paramount importance, as it increases trust and guarantees safety. Although agent policies learned through reinforcement learning (RL) and machine learning (ML) paradigms have demonstrated their dominance in various domains, they are difficult to deploy in high-stakes environments because of their algorithmic opacity. A structured and transparent representation of a policy helps us understand, evaluate, and modify it if necessary. Due to their inherent reactivity, modularity, and transparent hierarchical representation, Behavior Trees (BTs) are an ideal solution for representing control policies. In this paper, we focus on building a knowledge representation transfer framework in which the knowledge of trained RL agents is captured through imitation learning and then used to form a compact BT. Our primary focus is to retain maximum performance while improving the interpretability of the BTs. Combining planning and learning, we automate the formation of a BT and offer an alternative, transparent architecture for policy representation. In an extensive analysis with a variety of Gymnasium environments and simulations of the Robotics Package Delivery domain, we demonstrate the significant performance retention capability and superior interpretability of the proposed Imitation-BT.

BibTeX: @conference{Bthula2026, title = {Imitation-BT: Automating Behavior Tree Generation by Echoing Reinforcement Learning Agents}, author = {Shailendra Sekhar Bthula and Ramviyas Parasuraman}, year = {2026}, date = {2026-06-01}, booktitle = {2026 IEEE International Conference on Robotics & Automation (ICRA)}, keywords = {autonomy, behavior-trees, learning, planning}, pubstate = {forthcoming}, tppubtype = {conference} }
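The abstract leans on the standard tick semantics of Behavior Trees (Sequence and Fallback control nodes over condition and action leaves). The sketch below illustrates only those generic semantics, not the Imitation-BT construction itself; the `battery_low?`/`recharge`/`move_to_goal` leaves and the dictionary "blackboard" are hypothetical examples.

```python
from enum import Enum
from typing import Callable, Dict, List


class Status(Enum):
    SUCCESS = "SUCCESS"
    FAILURE = "FAILURE"
    RUNNING = "RUNNING"


class Leaf:
    """Condition or action leaf: wraps a function of the shared blackboard."""

    def __init__(self, name: str, fn: Callable[[Dict], Status]):
        self.name = name
        self.fn = fn

    def tick(self, bb: Dict) -> Status:
        return self.fn(bb)


class Sequence:
    """Ticks children left to right; returns the first non-SUCCESS status."""

    def __init__(self, children: List):
        self.children = children

    def tick(self, bb: Dict) -> Status:
        for child in self.children:
            status = child.tick(bb)
            if status != Status.SUCCESS:
                return status
        return Status.SUCCESS


class Fallback:
    """Ticks children left to right; returns the first non-FAILURE status."""

    def __init__(self, children: List):
        self.children = children

    def tick(self, bb: Dict) -> Status:
        for child in self.children:
            status = child.tick(bb)
            if status != Status.FAILURE:
                return status
        return Status.FAILURE


# Hypothetical leaves for a toy robot.
def is_battery_low(bb: Dict) -> Status:
    return Status.SUCCESS if bb["battery"] < 0.2 else Status.FAILURE


def recharge(bb: Dict) -> Status:
    bb["log"].append("recharge")
    return Status.SUCCESS


def move_to_goal(bb: Dict) -> Status:
    bb["log"].append("move")
    return Status.RUNNING  # long-running action, re-ticked next cycle


# If the battery is low, recharge; otherwise keep moving toward the goal.
root = Fallback([
    Sequence([Leaf("battery_low?", is_battery_low), Leaf("recharge", recharge)]),
    Leaf("move_to_goal", move_to_goal),
])
```

Because the root is re-ticked every control cycle, a change in the blackboard (for example, the battery dropping below the threshold) immediately switches the active branch; this is the reactivity the abstract refers to.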

2025

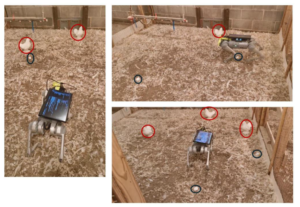

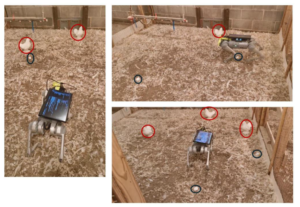

Autonomous Navigation of a Quadruped Robot to Approach Floor Eggs and Path Optimization Analysis for Commercial Feasibility. Journal Article, American Society of Agricultural and Biological Engineers, 41 (6), pp. 733-747, 2025. Tags: autonomy, control, navigation, perception, planning

Abstract: Floor eggs (i.e., eggs laid on the litter floor) are a major problem in cage-free hen systems and account for approximately 5% to 6% of daily egg production. If not collected in a timely manner, floor eggs may become contaminated or pecked by birds, which can induce egg eating, degrade egg quality, and increase the risk of additional floor eggs. Currently, floor eggs require time-consuming manual collection during daily flock inspections. The objective was to develop autonomous navigation for a quadruped robot to approach floor eggs and to evaluate commercial feasibility through optimized routing strategies. The robot was equipped with an RGB-Depth camera for object detection and depth estimation, and multiple deep learning object detection models were evaluated. Image coordinates were converted into real-world trajectories through mathematical operations to control the robot's movement. The robot was tested at speeds of 0.27, 0.34, 0.41, 0.52, and 0.68 m/s to approach floor eggs. Results show that the model successfully localizes floor eggs and hens with over 95% precision, recall, and mAP50(B). The robot approaches floor eggs with an average accuracy of 90%. Commercial feasibility was assessed through mathematical optimization analysis using boustrophedon cellular decomposition for two payload scenarios (50 and 77 eggs) in a typical 50,000-hen facility (380 × 18.2 m). The optimization analysis demonstrated operational viability, with total daily travel distances of 10.7 km (50-egg payload) and 7.8 km (77-egg payload) over seven daily charge cycles, successfully transferring 2,000 floor eggs to the conveyor belts. These findings show great potential for quadruped robot navigation and the commercial implementation of floor egg collection.

BibTeX: @article{Mandiga2025, title = {Autonomous Navigation of a Quadruped Robot to Approach Floor Eggs and Path Optimization Analysis for Commercial Feasibility}, author = {Aravind Mandiga and Guoming Li and Tianming Liu and Ramviyas Parasuraman and Ramana M Pidaparti and Venkat UC Bodempudi and Samuel E Aggrey}, url = {https://elibrary.asabe.org/abstract.asp?AID=55713}, doi = {10.13031/aea.16384}, year = {2025}, date = {2025-01-01}, journal = {American Society of Agricultural and Biological Engineers}, volume = {41}, number = {6}, pages = {733-747}, keywords = {autonomy, control, navigation, perception, planning}, pubstate = {published}, tppubtype = {article} }
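Converting a detection's image coordinates into a real-world target is commonly done with pinhole-camera back-projection using the aligned depth value. The sketch below assumes a calibrated camera with hypothetical intrinsics (`fx`, `fy`, `cx`, `cy`) and a detection's bounding-box center with its depth in meters. `approach_command` and its `stop_distance` are illustrative helpers, not the paper's controller, and the paper's exact transformations may differ.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Intrinsics:
    fx: float  # focal length in pixels along x (hypothetical calibration)
    fy: float  # focal length in pixels along y
    cx: float  # principal point x, in pixels
    cy: float  # principal point y, in pixels


def pixel_to_camera(u: float, v: float, depth_m: float, K: Intrinsics) -> Tuple[float, float, float]:
    """Back-project pixel (u, v) with depth Z into the camera frame (meters).

    Camera frame convention assumed here: X right, Y down, Z along the optical axis.
    """
    x = (u - K.cx) * depth_m / K.fx
    y = (v - K.cy) * depth_m / K.fy
    return x, y, depth_m


def approach_command(x: float, z: float, stop_distance: float = 0.3) -> Tuple[float, float]:
    """Hypothetical helper: heading error (rad, positive = target to the right)
    and the remaining travel distance so the robot stops short of the target."""
    heading = math.atan2(x, z)
    distance = max(math.hypot(x, z) - stop_distance, 0.0)
    return heading, distance
```

For example, with `fx = 600` and `cx = 320`, an egg detected at pixel column 380 and 2.0 m depth lies about 0.2 m to the right of the optical axis. A robot walking at one of the tested speeds would then close the returned distance while correcting the heading.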

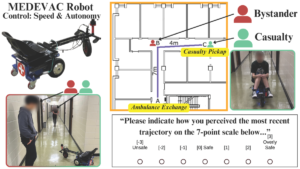

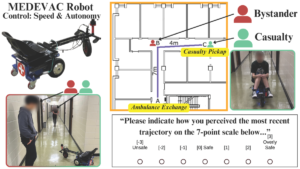

Analyzing Human Perceptions of a MEDEVAC Robot in a Simulated Evacuation Scenario. Conference, 2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2025. Tags: autonomy, human-robot interaction, navigation, trust

Abstract: The use of autonomous systems in medical evacuation (MEDEVAC) scenarios is promising, but existing implementations overlook key insights from human-robot interaction (HRI) research. Studies on human-machine teams demonstrate that human perceptions of a machine teammate are critical in governing the machine’s performance. Consequently, it is essential to identify the factors that contribute to positive human perceptions in human-machine teams. Here, we present a mixed factorial design to assess human perceptions of a MEDEVAC robot in a simulated evacuation scenario. Participants were assigned to the role of casualty (CAS) or bystander (BYS) and subjected to three within-subjects conditions based on the MEDEVAC robot’s operating mode: autonomous-slow (AS), autonomous-fast (AF), and teleoperation (TO). During each trial, a MEDEVAC robot navigated an 11-meter path, acquiring a casualty and transporting them to an ambulance exchange point while avoiding an idle bystander. Following each trial, subjects completed a questionnaire measuring their emotional states, perceived safety, and social compatibility with the robot. Results indicate a consistent main effect of operating mode on reported emotional states and perceived safety. Pairwise analyses suggest that the AF operating mode negatively impacted perceptions along these dimensions. There were no persistent differences between CAS and BYS responses.

BibTeX: @conference{Goodie2025, title = {Analyzing Human Perceptions of a MEDEVAC Robot in a Simulated Evacuation Scenario}, author = {Tyson Jordan and Pranav Pandey and Prashant Doshi and Ramviyas Parasuraman and Adam Goodie}, url = {https://ieeexplore.ieee.org/document/11246558}, doi = {10.1109/IROS60139.2025.11246558}, year = {2025}, date = {2025-10-19}, booktitle = {2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS)}, keywords = {autonomy, human-robot interaction, navigation, trust}, pubstate = {published}, tppubtype = {conference} }

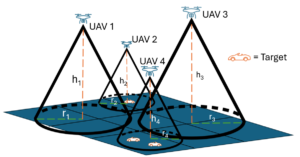

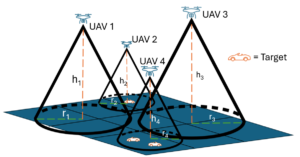

H-Cov: Multi-UAV Sensor Coverage with Altitude Optimization for Target Tracking. Workshop, IEEE ICRA 2025 Workshop on 25 YEARS OF AERIAL ROBOTICS: CHALLENGES AND OPPORTUNITIES, 2025. Tags: autonomy, control, multi-robot systems, planning

Abstract: This paper presents a distributed multi-target coverage control framework for multi-UAV (unmanned aerial vehicle) systems that integrates Voronoi-based coverage control with altitude optimization. The proposed method enables robots to adjust their positions and altitudes dynamically to optimize sensing performance across varying target distributions. The framework balances the trade-off between detection cost and coverage area by determining the optimal position and altitude for each robot. This allows the system to adapt to different environmental conditions and target densities, ensuring optimal performance in various scenarios. In the proposed framework, robots descend to lower altitudes in high-density target regions to improve detection accuracy, while in sparse regions they ascend to maximize coverage. A minimum altitude constraint is derived to maintain precise tracking in dense areas while ensuring that robots do not operate at excessively low altitudes. The approach guarantees complete coverage of the target space by guiding robots toward the weighted centroids of their respective Voronoi cells, thereby ensuring efficient task allocation and spatial distribution. Simulation experiments demonstrate the effectiveness of the proposed framework in improving tracking accuracy and coverage efficiency in different environments. The results validate the framework's capability to handle real-time multi-target tracking and sensor coverage in complex target distributions.

BibTeX: @workshop{Nistane2025, title = {H-Cov: Multi-UAV Sensor Coverage with Altitude Optimization for Target Tracking}, author = {Swaraj Nistane and Tohid Tasooji and Ramviyas Parasuraman}, url = {https://aerial-robotics-workshop-icra.com/wp-content/uploads/2025/05/Poster14.pdf}, year = {2025}, date = {2025-05-19}, booktitle = {IEEE ICRA 2025 Workshop on 25 YEARS OF AERIAL ROBOTICS: CHALLENGES AND OPPORTUNITIES}, keywords = {autonomy, control, multi-robot systems, planning}, pubstate = {published}, tppubtype = {workshop} }
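Guiding each robot toward the weighted centroid of its Voronoi cell is the classic Lloyd-style coverage update. The discretized sketch below assumes the target density is given as weights on sample points. It uses a hypothetical altitude rule in place of H-Cov's actual altitude optimization: altitude shrinks as the cell's target weight grows and is clamped to a minimum. The parameters `gain`, `h_max`, `h_min`, and `k` are illustrative only.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def voronoi_assign(robots: List[Point], samples: List[Point]) -> List[int]:
    """Discrete Voronoi partition: index of the nearest robot for each sample point."""
    owners = []
    for sx, sy in samples:
        d2 = [(sx - rx) ** 2 + (sy - ry) ** 2 for rx, ry in robots]
        owners.append(d2.index(min(d2)))
    return owners


def coverage_step(robots: List[Point], samples: List[Point], weights: List[float],
                  gain: float = 0.5, h_max: float = 20.0, h_min: float = 5.0,
                  k: float = 1.0) -> Tuple[List[Point], List[float]]:
    """One Lloyd-style update toward weighted cell centroids, plus an altitude
    per robot from a hypothetical density-based rule (not the paper's optimizer)."""
    owners = voronoi_assign(robots, samples)
    new_robots, altitudes = [], []
    for i, (rx, ry) in enumerate(robots):
        cell = [(w, s) for w, s, o in zip(weights, samples, owners) if o == i]
        mass = sum(w for w, _ in cell)
        if mass > 0:
            cx = sum(w * s[0] for w, s in cell) / mass
            cy = sum(w * s[1] for w, s in cell) / mass
        else:
            cx, cy = rx, ry  # empty cell: hold position
        # Move a fraction `gain` of the way toward the weighted centroid.
        new_robots.append((rx + gain * (cx - rx), ry + gain * (cy - ry)))
        # Denser cells -> lower altitude, but never below the minimum altitude.
        altitudes.append(max(h_min, h_max / (1.0 + k * mass)))
    return new_robots, altitudes
```

Iterating `coverage_step` drives each robot toward a centroidal Voronoi configuration, and the altitude rule reproduces the qualitative behavior described above: low over dense target clusters, high over sparse regions, and clamped at `h_min`.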

2024

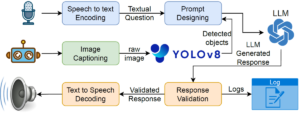

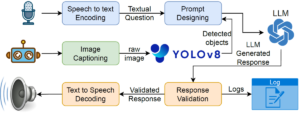

PhysicsAssistant: An LLM-Powered Interactive Learning Robot for Physics Lab Investigations. Workshop, IEEE ICRA 2024 Workshop on Accelerating Discovery in Natural Science Laboratories with AI and Robotics, 2024 (Selected for the Pioneer Award). Tags: assistive devices, autonomy, human-robot interaction, human-robot interface, learning

Abstract: Robot systems in education can leverage the natural language understanding capabilities of large language models (LLMs) to provide assistance and facilitate learning. This paper proposes a multimodal interactive robot (PhysicsAssistant) built on YOLOv8 object detection, cameras, speech recognition, and an LLM-based chatbot to assist students in physics labs. We conduct a user study with ten 8th-grade students to empirically evaluate the performance of PhysicsAssistant, in which a human expert rates the assistants' responses to student queries on a 0-4 scale based on Bloom's taxonomy to assess the educational support they provide. We compared PhysicsAssistant (YOLOv8 + GPT-3.5-turbo) with GPT-4 and found that the human expert rated both systems the same for factual understanding. However, GPT-4 was rated significantly higher than PhysicsAssistant for conceptual and procedural knowledge (3 and 3.2 vs. 2.2 and 2.6, respectively; p < 0.05). At the same time, GPT-4's response time was significantly longer than PhysicsAssistant's (3.54 vs. 1.64 s, p < 0.05). Hence, despite its lower response quality relative to GPT-4, PhysicsAssistant shows potential as a real-time lab assistant that provides timely responses and can relieve teachers of repetitive tasks. To the best of our knowledge, this is the first attempt to build such an interactive multimodal robotic assistant for K-12 science (physics) education.

BibTeX: @workshop{Latif2024d, title = {PhysicsAssistant: An LLM-Powered Interactive Learning Robot for Physics Lab Investigations}, author = {Ehsan Latif and Ramviyas Parasuraman and Xiaoming Zhai}, url = {https://sites.google.com/view/icra24-accelerating-discovery}, year = {2024}, date = {2024-05-13}, booktitle = {IEEE ICRA 2024 Workshop on Accelerating Discovery in Natural Science Laboratories with AI and Robotics}, note = {Selected for the Pioneer Award}, keywords = {assistive devices, autonomy, human-robot interaction, human-robot interface, learning}, pubstate = {published}, tppubtype = {workshop} }

2023

KT-BT: A Framework for Knowledge Transfer Through Behavior Trees in Multi-Robot Systems. Journal Article, IEEE Transactions on Robotics, 39 (5), pp. 4114-4130, 2023. Tags: autonomy, behavior-trees, heterogeneity, multi-robot, planning

Abstract: Multi-robot and multi-agent systems demonstrate collective (swarm) intelligence through the systematic and distributed integration of local behaviors in a group. Agents sharing knowledge about the mission and environment can enhance performance at both the individual and mission levels. However, this is difficult to achieve, partly due to the lack of a generic framework for transferring parts of an agent's knowledge (behaviors) to other agents. This paper presents a new knowledge representation framework and transfer strategy called KT-BT: Knowledge Transfer through Behavior Trees. The KT-BT framework follows a query-response-update mechanism through an online Behavior Tree framework, where agents broadcast queries for unknown conditions and respond with appropriate knowledge using a condition-action-control sub-flow. We embed a novel grammar structure called stringBT that encodes knowledge, enabling behavior sharing. We theoretically investigate the properties of the KT-BT framework in achieving a uniformly high level of knowledge across the entire group, compared to a heterogeneous system whose agents cannot share their knowledge. We extensively verify our framework in a simulated multi-robot search and rescue problem. The results show successful knowledge transfers and improved group performance in various scenarios. We further study the effects of opportunities and communication range on group performance, knowledge spread, and functional heterogeneity in a group of agents, presenting interesting insights.

BibTeX: @article{Venkata2023b, title = {KT-BT: A Framework for Knowledge Transfer Through Behavior Trees in Multi-Robot Systems}, author = {Sanjay Sarma Oruganti Venkata and Ramviyas Parasuraman and Ramana Pidaparti}, url = {https://ieeexplore.ieee.org/abstract/document/10183654}, doi = {10.1109/TRO.2023.3290449}, year = {2023}, date = {2023-07-13}, journal = {IEEE Transactions on Robotics}, volume = {39}, number = {5}, pages = {4114-4130}, keywords = {autonomy, behavior-trees, heterogeneity, multi-robot, planning}, pubstate = {published}, tppubtype = {article} }
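The query-response-update loop can be pictured with a toy model. This is a hypothetical sketch, not the paper's stringBT grammar: each agent's knowledge is a map from a condition to an action sub-flow, serialized in a made-up `condition->action1,action2` string. An agent that encounters an unknown condition broadcasts a query. Any neighbor within communication range that knows the condition responds with the encoded sub-flow, and the requester decodes it and updates its own knowledge.

```python
import math
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


def encode(condition: str, actions: List[str]) -> str:
    """Serialize a condition-action sub-flow (hypothetical format, not stringBT)."""
    return f"{condition}->{','.join(actions)}"


def decode(message: str) -> Tuple[str, List[str]]:
    """Inverse of encode()."""
    condition, actions = message.split("->", 1)
    return condition, actions.split(",")


@dataclass
class Agent:
    name: str
    pos: Tuple[float, float]
    knowledge: Dict[str, List[str]] = field(default_factory=dict)

    def respond(self, condition: str) -> Optional[str]:
        """Response step: answer only if this agent knows the queried condition."""
        if condition in self.knowledge:
            return encode(condition, self.knowledge[condition])
        return None

    def update(self, message: str) -> None:
        """Update step: add the received sub-flow without overwriting local knowledge."""
        condition, actions = decode(message)
        self.knowledge.setdefault(condition, actions)


def query(requester: Agent, condition: str, group: List[Agent], comm_range: float) -> bool:
    """Query step: broadcast to agents within comm_range and adopt the first response."""
    for other in group:
        if other is requester or math.dist(requester.pos, other.pos) > comm_range:
            continue  # cannot hear the broadcast (or is the requester itself)
        reply = other.respond(condition)
        if reply is not None:
            requester.update(reply)
            return True
    return False
```

With two agents 5 m apart, a query with a 4 m range fails and one with a 5 m range succeeds. This mirrors, in miniature, why communication range affects knowledge spread in the group.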

Mobile Robot Control and Autonomy Through Collaborative Twin. Conference, 2023 IEEE PerCom - International Conference on Pervasive Computing and Communications Workshops and other Affiliated Events, 2023. Tags: autonomy, cooperation, networking

Abstract: When a mobile robot lacks sufficient onboard computing or networking capabilities, it can rely on a remote computing architecture for its control and autonomy. In this paper, we introduce a novel collaborative twin strategy for control and autonomy on resource-constrained robots. In practice, such a strategy divides the mobile robot system into a cyber (simulated) space and a physical (real) space connected by a communication channel: the physical robot resides at the site of operation and is guided over a network by a simulated autonomous agent at a remote location. Building on the digital twin concept, our collaborative twin is capable of autonomous navigation through an advanced SLAM-based path planning algorithm, while the physical robot tracks the simulated twin's velocity and communicates feedback generated through interaction with its environment. We put a prioritized path planning application to the test in a collaborative teleoperation system in which a physical robot is guided by the simulation twin's autonomous navigation. We examine the performance of a physical robot led by autonomous navigation from the collaborative twin and assisted by a predicted force received from the physical robot. The experimental findings indicate the practicality of the proposed simulation-physical twinning approach and show computational and network performance improvements compared to typical remote computing and digital twin approaches.

BibTeX: @conference{Tahir2023, title = {Mobile Robot Control and Autonomy Through Collaborative Twin}, author = {Nazish Tahir and Ramviyas Parasuraman}, doi = {10.1109/PerComWorkshops56833.2023.10150325}, year = {2023}, date = {2023-03-17}, booktitle = {2023 IEEE PerCom - International Conference on Pervasive Computing and Communications Workshops and other Affiliated Events}, keywords = {autonomy, cooperation, networking}, pubstate = {published}, tppubtype = {conference} }

A Strategy-Oriented Bayesian Soft Actor-Critic Model. Conference, Procedia Computer Science, 220, pp. 561-566, ANT 2023, Elsevier, 2023. Tags: autonomy, learning

Abstract: Adopting reasonable strategies is challenging but crucial for an intelligent agent with limited resources working in hazardous, unstructured, and dynamic environments, in order to improve the system's utility, decrease the overall cost, and increase the probability of mission success. This paper proposes a novel hierarchical strategy decomposition approach based on the Bayesian chain rule to separate an intricate policy into several simple sub-policies and organize their relationships as Bayesian strategy networks (BSN). We integrate this approach into the state-of-the-art deep reinforcement learning (DRL) method, soft actor-critic (SAC), and build the corresponding Bayesian soft actor-critic (BSAC) model by organizing several sub-policies as a joint policy. We compare the proposed BSAC method with SAC and other state-of-the-art approaches such as TD3, DDPG, and PPO on the standard continuous control benchmarks Hopper-v2, Walker2d-v2, and Humanoid-v2 in MuJoCo with the OpenAI Gym environment. The results demonstrate the promising potential of the BSAC method to significantly improve training efficiency.

BibTeX: @conference{Yang2023b, title = {A Strategy-Oriented Bayesian Soft Actor-Critic Model}, author = {Qin Yang and Ramviyas Parasuraman}, url = {https://www.sciencedirect.com/science/article/pii/S1877050923006063}, doi = {10.1016/j.procs.2023.03.071}, year = {2023}, date = {2023-03-17}, booktitle = {Procedia Computer Science}, journal = {Procedia Computer Science}, volume = {220}, pages = {561-566}, publisher = {Elsevier}, series = {ANT 2023}, keywords = {autonomy, learning}, pubstate = {published}, tppubtype = {conference} }
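The Bayesian chain rule decomposition factorizes a joint action distribution into conditional sub-policies. For a two-part action, π(a1, a2 | s) = π1(a1 | s) · π2(a2 | s, a1), so the joint log-probability that SAC's entropy term needs is the sum of the sub-policy log-probabilities. The toy sketch below assumes two Gaussian sub-policies with made-up linear means and fixed standard deviations. It does not reproduce BSAC's networks, its Bayesian strategy network structure, or SAC's tanh squashing.

```python
import math
import random
from typing import List, Tuple


def gaussian_log_prob(x: float, mean: float, std: float) -> float:
    """log N(x; mean, std^2)."""
    return -0.5 * ((x - mean) / std) ** 2 - math.log(std) - 0.5 * math.log(2 * math.pi)


# Hypothetical sub-policies: linear means chosen only for illustration.
def sub_policy_1(state: List[float]) -> Tuple[float, float]:
    """pi1(a1 | s): returns (mean, std)."""
    return 0.5 * state[0], 0.2


def sub_policy_2(state: List[float], a1: float) -> Tuple[float, float]:
    """pi2(a2 | s, a1): the second sub-action is conditioned on the first."""
    return 0.3 * state[1] - 0.4 * a1, 0.1


def sample_joint_action(state: List[float], rng: random.Random) -> Tuple[Tuple[float, float], float]:
    """Sample (a1, a2) in chain-rule order and return the joint log-probability
    log pi(a1, a2 | s) = log pi1(a1 | s) + log pi2(a2 | s, a1)."""
    m1, s1 = sub_policy_1(state)
    a1 = rng.gauss(m1, s1)
    m2, s2 = sub_policy_2(state, a1)
    a2 = rng.gauss(m2, s2)
    log_p = gaussian_log_prob(a1, m1, s1) + gaussian_log_prob(a2, m2, s2)
    return (a1, a2), log_p
```

Sampling in chain-rule order is what lets each simple sub-policy depend on the choices made upstream while the product still defines one valid joint policy.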

2022

Sharing Autonomy of Exploration and Exploitation via Control Interface. Workshop, ICRA 2022 Workshop on Shared Autonomy in Physical Human-Robot Interaction: Adaptability and Trust, 2022. Tags: autonomy, human-robot interface, trust

Abstract: Shared autonomy is a control paradigm that refers to the adaptation of a robot’s autonomy level in dynamic environments while simultaneously taking human intentions and status into account. Here, the autonomy level can be changed based on internal/external information and human input. However, there are no clear guidelines or studies that help us understand “when” a robot should adapt its autonomy level for different functionalities. Therefore, in this paper, we create a framework that improves the human-robot control interface by allowing humans to adjust the robot’s autonomy level, and we design a study to gather insights into humans’ preferences for switching autonomy levels based on the current situation. We create two high-level strategies for a search and rescue task: Exploration, to gather more data, and Exploitation, to make use of current data. These two strategies can be achieved with human inputs or autonomous algorithms. We intend to understand human preferences regarding the autonomy levels for these two strategies (and “when” humans want to switch). The analysis is expected to provide insights into designing shared autonomy schemes and algorithms that consider human preferences when adaptively using autonomy levels for high-level strategies.

BibTeX: @workshop{Munir2022, title = {Sharing Autonomy of Exploration and Exploitation via Control Interface}, author = {Aiman Munir and Ramviyas Parasuraman}, url = {https://sites.google.com/view/saphri-icra2022/contributions}, year = {2022}, date = {2022-05-23}, booktitle = {ICRA 2022 Workshop on Shared Autonomy in Physical Human-Robot Interaction: Adaptability and Trust}, keywords = {autonomy, human-robot interface, trust}, pubstate = {published}, tppubtype = {workshop} }
