2025 |

|

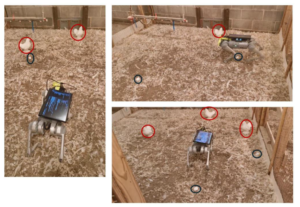

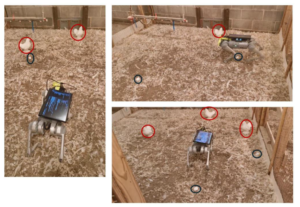

| Autonomous Navigation of a Quadruped Robot to Approach Floor Eggs and Path Optimization Analysis for Commercial Feasibility. Journal Article. American Society of Agricultural and Biological Engineers, 41 (6), pp. 733-747, 2025. Tags: autonomy, control, navigation, perception, planning |

@article{Mandiga2025,
  title = {Autonomous Navigation of a Quadruped Robot to Approach Floor Eggs and Path Optimization Analysis for Commercial Feasibility},
  author = {Aravind Mandiga and Guoming Li and Tianming Liu and Ramviyas Parasuraman and Ramana M Pidaparti and Venkat UC Bodempudi and Samuel E Aggrey},
  url = {https://elibrary.asabe.org/abstract.asp?AID=55713},
  doi = {10.13031/aea.16384},
  year = {2025},
  date = {2025-01-01},
  journal = {American Society of Agricultural and Biological Engineers},
  volume = {41},
  number = {6},
  pages = {733-747},
  abstract = {Floor eggs (i.e., eggs laid on the litter floor) are a major problem in cage-free hen systems and account for approximately 5% to 6% of daily egg production. Floor eggs may be contaminated and pecked by birds, which can induce egg eating, degradation of egg quality, and risk of additional floor eggs if not collected in a timely manner. Currently, floor eggs require time-consuming manual collection in daily flock inspection. The objective was to develop autonomous navigation for a quadruped robot to approach floor eggs and to evaluate commercial feasibility through optimized routing strategies. The robot was equipped with an RGB-Depth camera for object detection and depth estimation, and multiple deep learning object detection models were evaluated. Mathematical operations associated with imagery coordinates are converted to real-world trajectories for robot movement controls. The robot was tested at speeds of 0.27, 0.34, 0.41, 0.52, and 0.68 m/s to approach floor eggs. Results show the model successfully localizes floor eggs and hens with over 95% precision, recall, and mAP50(B). The robot approaches floor eggs with an average accuracy of 90%. Commercial feasibility was assessed through mathematical optimization analysis using boustrophedon cellular decomposition for two payload scenarios (50 and 77 eggs) in a typical 50,000-hen facility (380 x 18.2 m). Optimization analysis demonstrated operational viability with total daily travel distances of 10.7 km (50-egg payload) and 7.8 km (77-egg payload) for seven daily charge cycles, successfully transferring 2,000 floor eggs to the conveyor belts. These findings show great potential for quadruped robot navigation and commercial implementation for floor egg collection.},
  keywords = {autonomy, control, navigation, perception, planning},
  pubstate = {published},
  tppubtype = {article}
}
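As a rough illustration of the boustrophedon idea named in the abstract above: for an obstacle-free rectangular floor, cellular decomposition leaves a single cell, and the coverage path reduces to a back-and-forth sweep. The sketch below is not the authors' optimization. The function names, the swath width, and the centred-pass layout are assumptions made for illustration, and it ignores payload limits, charge cycles, and trips to the conveyor belts, so it does not reproduce the distances reported in the paper.

```python
import math


def boustrophedon_waypoints(length_m, width_m, swath_m):
    """Return back-and-forth sweep waypoints over an obstacle-free rectangle.

    length_m, width_m: rectangle dimensions in metres.
    swath_m: assumed width covered in one pass (hypothetical parameter).
    """
    if length_m <= 0 or width_m <= 0 or swath_m <= 0:
        raise ValueError("all dimensions must be positive")

    # Number of lengthwise passes needed to cover the full width.
    n_passes = max(1, math.ceil(width_m / swath_m))
    # Spread the passes evenly so each one runs down the centre of its strip.
    spacing = width_m / n_passes

    waypoints = []
    for i in range(n_passes):
        y = (i + 0.5) * spacing
        # Alternate direction on every pass (the "ox-plough" pattern).
        if i % 2 == 0:
            start_x, end_x = 0.0, length_m
        else:
            start_x, end_x = length_m, 0.0
        waypoints.append((start_x, y))
        waypoints.append((end_x, y))
    return waypoints


def path_length(waypoints):
    """Total Euclidean length of the polyline through the waypoints."""
    return sum(math.dist(a, b) for a, b in zip(waypoints, waypoints[1:]))
```

With the facility dimensions quoted in the abstract and an assumed 2 m swath, the sweep length would be `path_length(boustrophedon_waypoints(380.0, 18.2, 2.0))`. A full feasibility analysis would add the return trips forced by the egg payload and battery limits, which this sketch leaves out.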

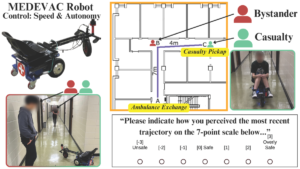

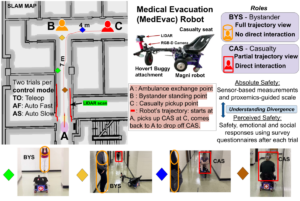

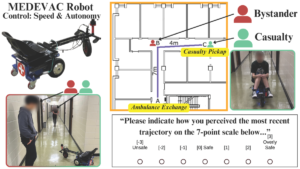

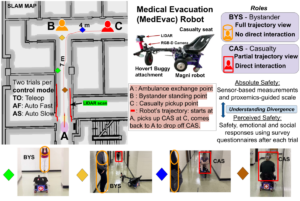

| Analyzing Human Perceptions of a MEDEVAC Robot in a Simulated Evacuation Scenario. Conference. 2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2025. Tags: autonomy, human-robot interaction, navigation, trust |

@conference{Goodie2025,
  title = {Analyzing Human Perceptions of a MEDEVAC Robot in a Simulated Evacuation Scenario},
  author = {Tyson Jordan and Pranav Pandey and Prashant Doshi and Ramviyas Parasuraman and Adam Goodie},
  url = {https://ieeexplore.ieee.org/document/11246558},
  doi = {10.1109/IROS60139.2025.11246558},
  year = {2025},
  date = {2025-10-19},
  booktitle = {2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS)},
  abstract = {The use of autonomous systems in medical evacuation (MEDEVAC) scenarios is promising, but existing implementations overlook key insights from human-robot interaction (HRI) research. Studies on human-machine teams demonstrate that human perceptions of a machine teammate are critical in governing the machine’s performance. Consequently, it is essential to identify the factors that contribute to positive human perceptions in human-machine teams. Here, we present a mixed factorial design to assess human perceptions of a MEDEVAC robot in a simulated evacuation scenario. Participants were assigned to the role of casualty (CAS) or bystander (BYS) and subjected to three within-subjects conditions based on the MEDEVAC robot’s operating mode: autonomous-slow (AS), autonomous-fast (AF), and teleoperation (TO). During each trial, a MEDEVAC robot navigated an 11-meter path, acquiring a casualty and transporting them to an ambulance exchange point while avoiding an idle bystander. Following each trial, subjects completed a questionnaire measuring their emotional states, perceived safety, and social compatibility with the robot. Results indicate a consistent main effect of operating mode on reported emotional states and perceived safety. Pairwise analyses suggest that the employment of the AF operating mode negatively impacted perceptions along these dimensions. There were no persistent differences between CAS and BYS responses.},
  keywords = {autonomy, human-robot interaction, navigation, trust},
  pubstate = {published},
  tppubtype = {conference}
}

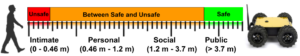

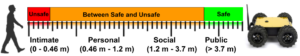

| Integrating Perceptions: A Human-Centered Physical Safety Model for Human-Robot Interaction. Conference. 2025 34th IEEE International Conference on Robot and Human Interactive Communication (RO-MAN), 2025. Tags: cooperation, human-robot interaction, navigation, trust |

@conference{Pandey2025,
  title = {Integrating Perceptions: A Human-Centered Physical Safety Model for Human-Robot Interaction},
  author = {Pranav Kumar Pandey and Ramviyas Parasuraman and Prashant Doshi},
  url = {https://ieeexplore.ieee.org/document/11217747},
  doi = {10.1109/RO-MAN63969.2025.11217747},
  year = {2025},
  date = {2025-08-25},
  booktitle = {2025 34th IEEE International Conference on Robot and Human Interactive Communication (RO-MAN)},
  abstract = {Ensuring safety in human-robot interaction (HRI) is essential to foster user trust and enable the broader adoption of robotic systems. Traditional safety models primarily rely on sensor-based measures, such as relative distance and velocity, to assess physical safety. However, these models often fail to capture subjective safety perceptions, which are shaped by individual traits and contextual factors. In this paper, we introduce and analyze a parameterized general safety model that bridges the gap between physical and perceived safety by incorporating a personalization parameter, ρ, into the safety measurement framework to account for individual differences in safety perception. Through a series of hypothesis-driven human-subject studies in a simulated rescue scenario, we investigate how emotional state, trust, and robot behavior influence perceived safety. Our results show that ρ effectively captures meaningful individual differences, driven by affective responses, trust in task consistency, and clustering into distinct user types. Specifically, our findings confirm that predictable and consistent robot behavior as well as the elicitation of positive emotional states, significantly enhance perceived safety. Moreover, responses cluster into a small number of user types, supporting adaptive personalization based on shared safety models. Notably, participant role significantly shapes safety perception, and repeated exposure reduces perceived safety for participants in the casualty role, emphasizing the impact of physical interaction and experiential change. These findings highlight the importance of adaptive, human-centered safety models that integrate both psychological and behavioral dimensions, offering a pathway toward more trustworthy and effective HRI in safety-critical domains.},
  keywords = {cooperation, human-robot interaction, navigation, trust},
  pubstate = {published},
  tppubtype = {conference}
}

| GSI- A Proxemics-Guided Generalized Safety Metric For Evaluating Safety in Social Navigation Context. Workshop. IEEE ICRA 2025 Workshop on Advances in Social Navigation: Planning, HRI and Beyond, 2025 (received Best Poster Award). Tags: human-robot interaction, navigation, trust |

@workshop{Pandey2025b,
  title = {GSI- A Proxemics-Guided Generalized Safety Metric For Evaluating Safety in Social Navigation Context},
  author = {Pranav Pandey and Ramviyas Parasuraman and Prashant Doshi},
  url = {https://socialnav2025.pages.dev/papers/GSI-%20A%20Proxemics-Guided%20Generalized%20Safety%20Metric%20For%20Evaluating%20Safety%20in%20Social%20Navigation%20Context.pdf},
  year = {2025},
  date = {2025-05-19},
  booktitle = {IEEE ICRA 2025 Workshop on Advances in Social Navigation: Planning, HRI and Beyond},
  note = {Received Best Poster Award.},
  keywords = {human-robot interaction, navigation, trust},
  pubstate = {published},
  tppubtype = {workshop}
}

Publications
